Introduction

A few years ago, I struggled to improve my land cover classification results. At the time, I was obsessed with trying the latest, shiniest, most advanced machine learning models. I invested a lot of time and effort in learning how to run these models in Python or R. Yet time and time again, the results were disappointing, despite the success stories I kept reading about in leading remote sensing and GIS journals. Yes, I was caught up in the machine learning hype and the promise that it would transform geospatial data analysis.

So what was I doing wrong? Why was I struggling? Eventually, I realized that my mindset was fixated on applying whatever advanced machine learning models were in fashion at the time. So I took a step back. I started performing land cover classification with a simple machine learning model, k-nearest neighbors (KNN). And guess what? The results were similar to, or even better than, those of the advanced models. From that moment, I realized that there was more to land cover classification than tuning or optimizing advanced machine learning models. While this sounds like common sense, some researchers and students still focus only on the newest machine learning or deep learning models.

Why Explainable Machine Learning?

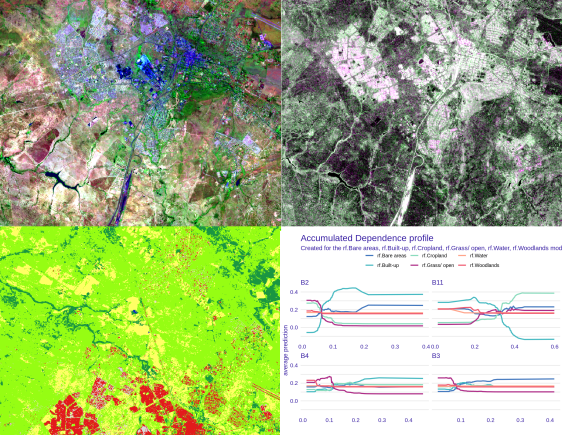

I had to go back to the basics. I started by understanding the classification problem at hand and the geography of the study area. I reviewed the land cover class definitions at the appropriate scale of analysis and selected relevant reference data, satellite imagery, and ancillary data. I focused on compiling reliable training data. Only then did I build simple and advanced machine learning models, tune model parameters, perform cross-validation, and evaluate the models. I also became interested in land cover classification uncertainty and errors, which led me to explainable machine learning. I focused on understanding a machine learning model's underlying mechanisms, biases, and errors.
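To make the "start simple, then cross-validate" step concrete, here is a minimal sketch of a KNN classifier evaluated with k-fold cross-validation. It is written in plain Python rather than R, and everything in it is an assumption for illustration: the two bands (red and NIR reflectance), the nine training pixels, the class names, and the helper functions `knn_predict` and `k_fold_accuracy` are hypothetical and are not taken from the book.

```python
import math
from collections import Counter

def knn_predict(train_X, train_y, x, k=3):
    """Assign a class to one pixel by majority vote of its k nearest training pixels."""
    nearest = sorted((math.dist(x, tx), ty) for tx, ty in zip(train_X, train_y))[:k]
    return Counter(label for _, label in nearest).most_common(1)[0][0]

def k_fold_accuracy(X, y, n_folds=3, k=3):
    """Overall accuracy from k-fold cross-validation; fold f holds samples f, f+n_folds, ..."""
    n = len(X)
    correct = 0
    for fold in range(n_folds):
        test_idx = set(range(fold, n, n_folds))
        # Train on every pixel that is not in the held-out fold.
        train_X = [X[i] for i in range(n) if i not in test_idx]
        train_y = [y[i] for i in range(n) if i not in test_idx]
        for i in test_idx:
            if knn_predict(train_X, train_y, X[i], k) == y[i]:
                correct += 1
    return correct / n

# Hypothetical (red, NIR) surface reflectance for nine labelled training pixels.
X = [
    (0.05, 0.03), (0.06, 0.04), (0.04, 0.02),  # water
    (0.04, 0.45), (0.05, 0.50), (0.03, 0.48),  # vegetation
    (0.25, 0.30), (0.28, 0.32), (0.30, 0.29),  # bare soil
]
y = ["water"] * 3 + ["vegetation"] * 3 + ["bare soil"] * 3

# Compare a couple of values of k, the main KNN tuning parameter.
for k in (1, 3):
    print(f"k={k}: cross-validated accuracy = {k_fold_accuracy(X, y, k=k):.2f}")
```

On real imagery you would use many more samples, spatially independent folds, and a library implementation; the point here is only that a simple, transparent model plus honest cross-validation is a useful baseline before reaching for anything more complex.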

Recently, researchers have developed methods to address the complexity and limited explainability of machine learning models. But what is explainable machine learning? And how does it help to improve land cover mapping results? Explainable machine learning refers to methods that allow analysts to explain the underlying mechanisms of a machine learning model. That is, explainable machine learning lets us (humans) explain, after training (post hoc), what the model learned and how it made its predictions. For example, explainable machine learning can give us insight into the algorithms used for land cover classification. This insight can help us understand how an algorithm works and how it assigns classes to pixels.
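One widely used post-hoc, model-agnostic explanation is permutation feature importance: shuffle one input band across the evaluation pixels and measure how much the accuracy drops. The sketch below applies it to a small KNN classifier in plain Python. It is one illustrative technique, not necessarily the approach used in the book, and the band names (`nir` and a deliberately uninformative `noise` band), the pixel values, and the function names are all hypothetical.

```python
import math
import random
from collections import Counter

def knn_predict(train_X, train_y, x, k=3):
    """Majority vote of the k nearest training pixels."""
    nearest = sorted((math.dist(x, tx), ty) for tx, ty in zip(train_X, train_y))[:k]
    return Counter(label for _, label in nearest).most_common(1)[0][0]

def accuracy(train_X, train_y, eval_X, eval_y, k=3):
    hits = sum(knn_predict(train_X, train_y, x, k) == lab for x, lab in zip(eval_X, eval_y))
    return hits / len(eval_y)

def permutation_importance(train_X, train_y, eval_X, eval_y, names, n_repeats=20, seed=0):
    """Mean drop in accuracy when one band is shuffled across the evaluation pixels."""
    rng = random.Random(seed)
    baseline = accuracy(train_X, train_y, eval_X, eval_y)
    scores = {}
    for j, name in enumerate(names):
        drops = []
        for _ in range(n_repeats):
            column = [x[j] for x in eval_X]
            rng.shuffle(column)
            # Rebuild each pixel with only band j replaced by its shuffled value.
            shuffled = [x[:j] + (v,) + x[j + 1:] for x, v in zip(eval_X, column)]
            drops.append(baseline - accuracy(train_X, train_y, shuffled, eval_y))
        scores[name] = sum(drops) / n_repeats
    return scores

# Hypothetical (nir, noise) values; only NIR separates water from vegetation.
train_X = [(0.04, 0.01), (0.06, 0.00), (0.05, 0.02), (0.48, 0.01), (0.52, 0.02), (0.50, 0.00)]
eval_X = [(0.05, 0.00), (0.03, 0.02), (0.07, 0.01), (0.49, 0.02), (0.51, 0.00), (0.47, 0.01)]
labels = ["water"] * 3 + ["vegetation"] * 3

importance = permutation_importance(train_X, labels, eval_X, labels, names=["nir", "noise"])
print(importance)
```

A large score means the model relies heavily on that band; a score near zero means shuffling the band barely matters to the predictions. This tells you what the model uses, not whether the band is physically meaningful, so it complements rather than replaces domain knowledge of the study area.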

Data-centric Explainable Machine Learning

Over the years, many remote sensing researchers and analysts have focused on improving or tuning machine learning algorithms. In most cases, researchers applied these algorithms in developed countries with quality reference data sets (e.g., field data, aerial photographs, and high-resolution satellite imagery). However, most developing countries lack such reference data sets. Therefore, remote sensing researchers and analysts often need to spend more time and effort creating quality reference (training and validation) data and finding appropriate satellite imagery and ancillary data. A data-centric explainable machine learning approach systematically improves reference data quality to enhance the accuracy, generalizability, and accountability of machine learning models.
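As a small, data-centric example, one simple way to screen training data is to flag samples whose label disagrees with the majority label of their nearest neighbors in feature space (a leave-one-out KNN check). The sketch below is an assumption-laden illustration, not the book's procedure: the two bands, the pixel values, the deliberately mislabelled sample, and the function `flag_suspect_labels` are hypothetical.

```python
import math
from collections import Counter

def flag_suspect_labels(X, y, k=3):
    """Return indices of samples whose label disagrees with the majority of their k nearest neighbours."""
    suspects = []
    for i, (x, label) in enumerate(zip(X, y)):
        # Leave-one-out: compare the sample with every other sample, never itself.
        neighbours = sorted((math.dist(x, X[j]), y[j]) for j in range(len(X)) if j != i)
        majority = Counter(lbl for _, lbl in neighbours[:k]).most_common(1)[0][0]
        if majority != label:
            suspects.append(i)
    return suspects

# Hypothetical (red, NIR) reflectance; the last pixel looks like vegetation but is labelled water.
X = [(0.05, 0.03), (0.06, 0.04), (0.04, 0.02),
     (0.04, 0.45), (0.05, 0.50), (0.03, 0.48),
     (0.05, 0.47)]
y = ["water", "water", "water",
     "vegetation", "vegetation", "vegetation",
     "water"]

print(flag_suspect_labels(X, y))
```

Flagged samples are candidates for review against reference imagery or field data, not automatic deletions: a pixel can legitimately look unusual, for example at a class boundary or in a mixed pixel.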

Next Steps

Land cover classification remains challenging. However, more very high-resolution images are now available for creating quality reference data. In addition, improving the transparency and accountability of machine learning models is becoming an important research topic. I have published a book, 'Data-centric Explainable Machine Learning for Land Cover Classification: A Practical Guide in R.' The book is for readers interested in improving land cover classification using a data-centric explainable machine learning approach. If you want to learn more about the book, please see:

Data-centric Explainable Machine Learning